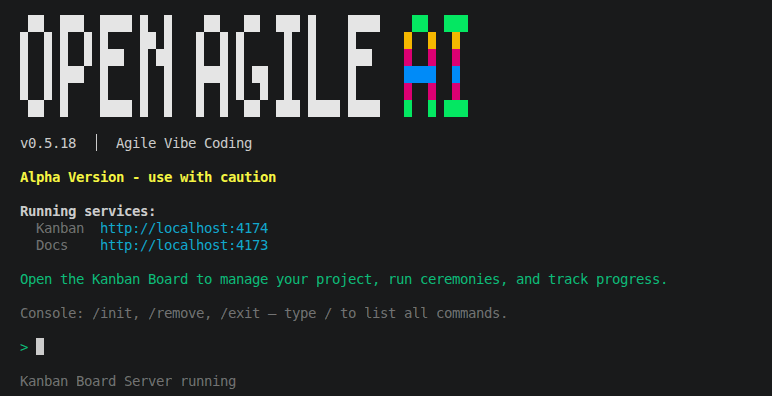

Try It Now — Alpha Release

Install

npm install -g @openagile/avc@beta

Launch in your project folder

cd my-project && avc

This creates a .avc/ directory and opens the Kanban board at localhost:4174. Add your LLM API key in Settings: OpenAI, Anthropic, Gemini, Xiaomi MiMo, or local models (LM Studio/Ollama).

Run Sponsor Call

Describe your project idea. The tool generates a structured project brief with scope, tech stack, and constraints.
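To make the idea of a "structured project brief" concrete, here is a minimal sketch of what such a brief could look like as data. The `ProjectBrief` type, its field names, and the `missingSections` helper are assumptions made for illustration; they are not the tool's actual output format.

```typescript
// Hypothetical shape for a project brief; field names are assumptions,
// not the real format produced by the Sponsor Call.
interface ProjectBrief {
  idea: string;
  scope: string[];
  techStack: string[];
  constraints: string[];
}

// List the sections that are still empty, so gaps are caught before planning.
function missingSections(brief: ProjectBrief): string[] {
  const missing: string[] = [];
  if (brief.idea.trim() === "") missing.push("idea");
  if (brief.scope.length === 0) missing.push("scope");
  if (brief.techStack.length === 0) missing.push("techStack");
  if (brief.constraints.length === 0) missing.push("constraints");
  return missing;
}

const brief: ProjectBrief = {
  idea: "A habit tracker with weekly summaries",
  scope: ["daily check-ins", "weekly email digest"],
  techStack: ["TypeScript", "PostgreSQL"],
  constraints: [],
};

console.log(missingSections(brief)); // ["constraints"]
```

A check like this is one way to review a generated brief before moving on to Sprint Planning: an empty section usually means the idea description needs more detail.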

Run Sprint Planning

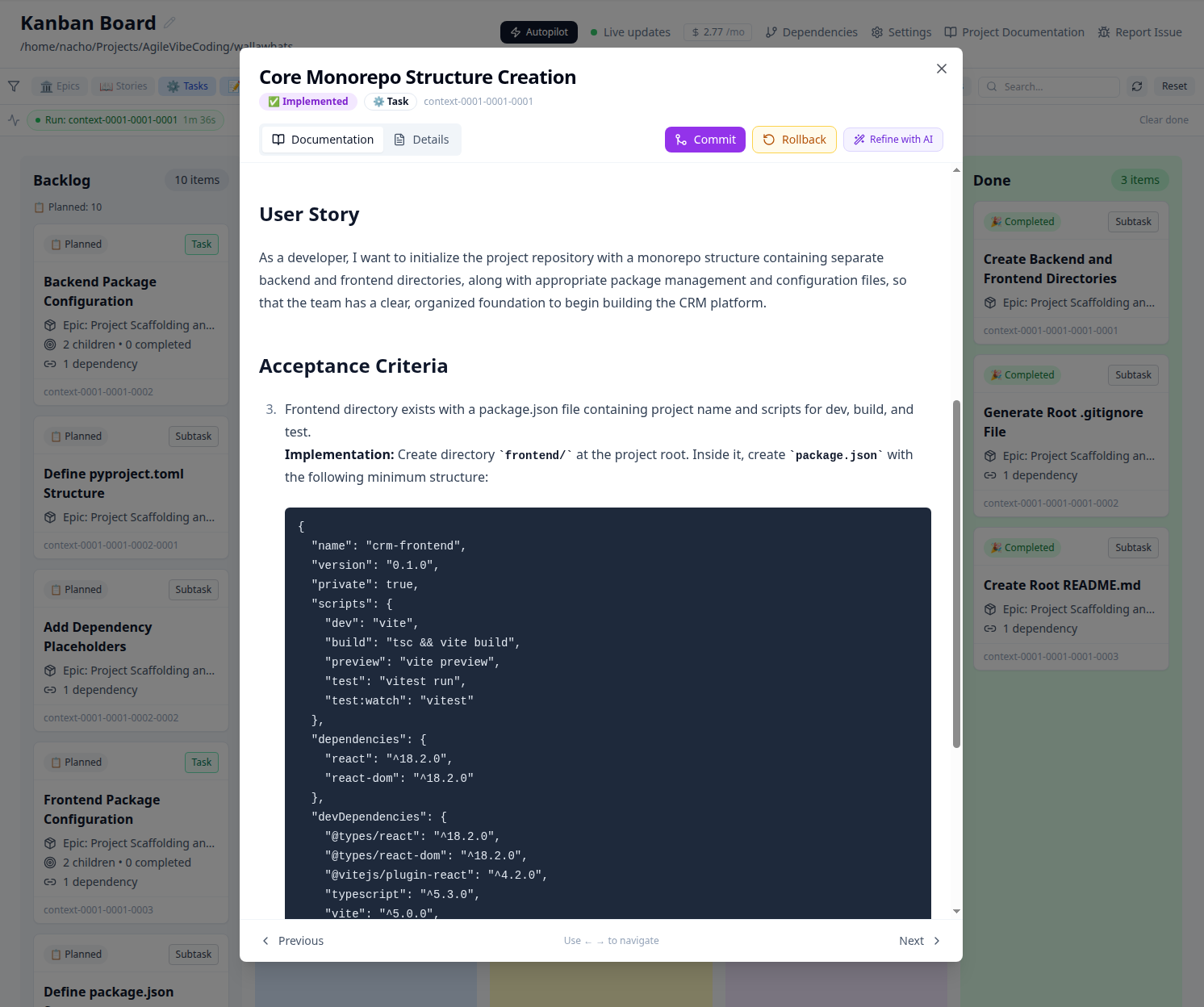

The project decomposes into epics, stories, and tasks — each with acceptance criteria, scoped context, and dependency maps. Explore them in the Kanban board.
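The hierarchy described above can be pictured as a small tree of work items linked by dependencies. The sketch below is illustrative only: the `WorkItem`, `Story`, and `Epic` types and the `readyStories` helper are hypothetical names, not the schema stored under .avc/.

```typescript
// Hypothetical shapes for illustration; not the tool's actual schema.
type Status = "todo" | "in-progress" | "done";

interface WorkItem {
  id: string;
  title: string;
  status: Status;
  acceptanceCriteria: string[];
  dependsOn: string[]; // ids of stories that must be done first
}

interface Story extends WorkItem {
  tasks: WorkItem[];
}

interface Epic extends WorkItem {
  stories: Story[];
}

// Return the stories that have not started and whose dependencies are all done.
function readyStories(epics: Epic[]): Story[] {
  const all: Story[] = [];
  for (const epic of epics) {
    for (const story of epic.stories) all.push(story);
  }
  const done: Record<string, boolean> = {};
  for (const story of all) {
    if (story.status === "done") done[story.id] = true;
  }
  return all.filter(
    (s) => s.status === "todo" && s.dependsOn.every((id) => done[id] === true),
  );
}

// Small helper to keep the sample data short.
function story(id: string, status: Status, dependsOn: string[]): Story {
  return { id, title: id, status, acceptanceCriteria: [], dependsOn, tasks: [] };
}

const epics: Epic[] = [
  {
    id: "E1",
    title: "User accounts",
    status: "in-progress",
    acceptanceCriteria: ["Users can sign up and log in"],
    dependsOn: [],
    stories: [
      story("S1", "done", []),
      story("S2", "todo", ["S1"]),
      story("S3", "todo", ["S2"]),
    ],
  },
];

console.log(readyStories(epics).map((s) => s.id)); // ["S2"]
```

Here S2 is ready because S1 is done, while S3 stays blocked until S2 finishes. That is the kind of ordering a dependency map makes explicit.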

Review what was generated

Open the Kanban board at localhost:4174. You should see the full epic → story hierarchy with structured specs, acceptance criteria, and dependencies. This is what OpenAgile.AI does best today.